PSO Algorithm

This section describes the PSO algorithm.

The Particle Swarm Optimization (PSO) algorithm, initially used to simulate the social behavior of bird flocks or fish schools, is a stochastic optimization technique based on collective collaboration. It has been widely applied to address various optimization issues, including nanophotonics design . The main purpose of the PSO algorithm is to find the optimal solution through collaboration and information sharing among individuals within the swarm.

Basic Principles of PSO Algorithm

In the process of seeking the optimal solution based on the PSO algorithm, each particle in the swarm exhibits both individual and collective behavior. They not only learn from the flight experience of their companions but also leverage their own flight experience to contribute to the search for the optimal solution. Each particle learns from two values: individual historical best value pbest and swarm-based global best value gbest. Particles adjust their velocity and position based on these two values, and the quality of each position is determined by the fitness value. The fitness function serves as the objective function for optimization.

Particle and Velocity Initialization

In a D-dimensional objective search space, there is a particle swarm consisting of N particles. Each particle is represented by a D-dimensional vector, and its spatial position can be expressed as:

xi=(xi1,xi2,...,xiD),i=1,2,3,...,N

The spatial position of each particle in the swarm provides a solution to the objective optimization problem. By substituting it into the fitness function, the fitness value can be calculated and then used to measure the quality of the particle.

The flight velocity of the ith particle is also represented as a D-dimensional vector, which is expressed as:

vi=(vi1,vi2,...,viD),i=1,2,3,...,N

The average values of the positions and velocities of the particles are randomly generated within a specified range.

Individual Historical Best Value and Global Best Value

The position associated with the best fitness value experienced by the ith particle is referred to as its individual historical optimal position, which is expressed as:

pbesti=(pbesti1,pbesti2,...,pbestiD),i=1,2,3,...,N

The position associated with the best fitness value experienced by the entire particle swarm is referred to as the global optimal position, which is expressed as:

gbesti=(gbesti1,gbesti2,...,gbestiD),i=1,2,3,...,N

Particle Swarm Velocity and Position Update

For a particle swarm, the position update operation can be expressed by the velocity update formula and the position update formula.

The velocity update formula is:

vij(t+1)=vij(t)+c1r1(pbestij(t)−xij(t))+c2r2(gbestj(t)−xij(t))

The position update formula is:

xij(t+1)=xij(t)+vij(t+1)

Wherein, the subscript j represents the j-th dimension of a particle; the subscript i represents the ith particle; t represents the current iteration count; c1 and c2 represent the acceleration constants, typically taken within the range of (0,2); r1 and r2 represent independent random numbers, typically taken within the range of [0,1]. As shown in the above formulas, the constants c1 and c2 indicate that particles, through the combination of learning from individuals and the swarm, can leverage both individual and collective search experiences for their benefit.

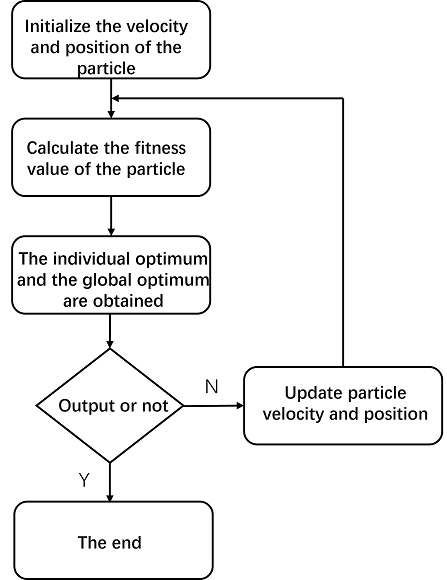

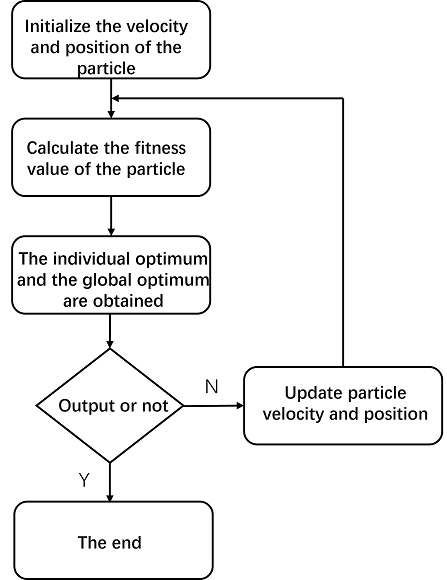

PSO Algorithm Process

The PSO algorithm is executed in the following steps:

- Initialize the particle swarm parameters c1 and c2, set the boundary ranges of position and velocity, and initialize the velocities and positions of the particle swarm;

- Calculate the objective values based on the objective function, and record each historical best value pbest and the global best value gbest respectively;

- Update the velocities of the particle swarm using the velocity update formula, and constrain any velocities that exceed the boundary;

- Update the position of the particle swarm using the position update formula, and constrain any positions that exceed the boundary;

- Calculate the new objective values based on the objective function;

- Compare each particle's new objective value with its historical best objective value, and take the former as the new historical best value pbest if it is better;

- Compare each particle's new objective value with the global best objective value achieved by the swarm, and take the former as the new global best value gbest if it is better;

- Determine whether the termination condition is met (the maximum number of iterations is reached). If so, output the optimal position. Otherwise, repeat Steps 3-8.

References

Enterprise WeChat

Enterprise WeChat WeChat Service Account

WeChat Service Account